ICICS houses multiple state-of-the-art research labs where faculty and grad students from a wide range of disciplines conduct advanced technologies research. Below we provide brief descriptions of this work, with links to lab websites for more information.

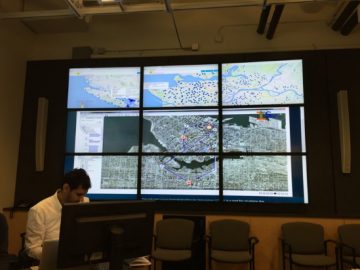

X009 Complex Systems Integration (CSI) Laboratory

The Complex Systems Integration (CSI) Laboratory at ICICS develops real-time tools to predict and respond to large disasters with high impact on human lives and the environment. We develop the full disaster preparation and response cycle: sensors, communications, very fast solutions and AI algorithms to determine the best response actions. Interdependencies are assessed among Critical Infrastructures in multivariable nonlinear systems that incorporate engineering, management, and human factors. Current and past projects include the Vancouver 2010 Winter Olympics, the CANARIE Disaster Response Network Enabled Platform (DR-NEP), the European Critical Infrastructures Preparedness and Resilience Research Network (CIPRNet), the Earthquake Early Warning 5G Network for the Smart City (Quake5G) with Rogers Communications, and the Wildfire Disaster Response Network (WFDRnet), also with Rogers Communications.

Top 3 research impacts:

- The Infrastructures Interdependencies Simulator i2SIM has been used in two disaster response networks in Europe: the MATRIX network for multi-hazard events and the CIPRNet network for critical infrastructures collaboration.

- The Earthquake Early Warning network for the smart city is the first of its kind to deploy a dense network of sensors in a city integrated with the 5G network to accurately predict damage to structures and take remedial actions based on the full waveform of the arriving earthquake waves.

- The Wildfire Disaster Response Network is the first of its kind to integrate real-time measurements of the fire path parameters, real-time prediction of fire spread, and the real-time interaction of responders’ actions with the fire propagation equations.

Supervisors

José R. Martí & Carlos E. Ventura

X015 Collaborative Advanced Robotics and Intelligent Systems (CARIS) Laboratory

In the CARIS lab, researchers pursue world-class experimental research to advance the science of human-robot interaction (HRI). Using advanced lab equipment spanning a Willow Garage PR2 personal robot (the only one in Canada), two Barrett WAM 7DOF robot arms and grippers, a unique robotic virtual reality environment to study human balance, and a room-sized integrated force and motion capture system, among other advanced sensing, control and actuation equipment, the researchers examine and answer impactful and novel research questions in HRI.

Supervisors

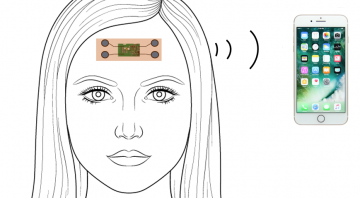

X015 Sensing in Biomechanical Processes Lab (SimPL)

The Sensing in Biomechanical Processes Lab (SimPL) is a research lab in the Mechanical Engineering Department at the University of British Columbia. At SimPL, we develop sensing, modeling, and data analytics technologies for human health applications. Specifically, we have target application areas of brain injury and sleep disorders. We have developed and applied wearable sensors to measure health parameters in these application areas, with the goal of gaining a deeper understanding of the mechanisms of these prevalent health conditions, which can be further translated into prevention, screening, and diagnostics tools.

Top 3 research impacts:

- We have developed wearable inertial sensors to measure head impact accelerations in sports and investigate the fundamental biomechanical mechanisms of concussions.

- We have found that even mild sports head impacts may lead to subtle and transient physiological changes in the brain.

- We are developing mobile brain body imaging (MoBI) systems for real-world measurements of human brain function and physical activity.

Supervisors

X027 Micro-Electro-Mechanical Systems (MEMS) Lab

The Micro-Electro-Mechanical Systems (MEMS) Lab conducts research in fabrication and packaging of micro-electro-mechanical systems for biological applications. The MEMS lab has been exploring new applications and methods of making miniature biomedical devices:

- Micro-optical scanner (Collaborator: H. Zeng, BC Cancer)

- Remote-controlled drug delivery devices (Collaborators: H. Burt, J. Jackson and U. Hafeli, Pharmaceutical Sciences)

Supervisors

X210 Computer Vision Lab

The Computer Vision Lab is one of the most influential vision groups in the world. This group created RoboCup and the celebrated SIFT feature object recognition algorithm. Researchers in this lab focus on building algorithms for efficient perception of visual data in computers. They develop algorithms in the areas of image understanding, video understanding, multimodal analysis, geometric visual understanding, human pose estimation, visual synthesis, and understanding of sports videos, using machine learning and deep learning techniques.

Supervisors

X221 Biomedical Engineering Research Lab

Faculty in the Biomedical Engineering research group use engineering techniques and technologies to address needs within the medical and healthcare industries. Opportunities for education and research exist in areas such as biomechanics, biomaterials, biochemical processing, cellular engineering, imaging, medical devices, micro-electro-mechanical implantable systems, physiological modeling, simulation, monitoring and control, as well as medical robotics.

Supervisors

X227 Advanced Numerical Simulation Laboratory (ANSLab)

The Advanced Numerical Simulation Laboratory specializes in developing techniques for the numerical solution of problems in aerodynamics. In particular, researchers are working to take advantage of both the geometric flexibility of unstructured mesh methods and the accuracy benefits of high-order methods. Recent work has exploited Newton-GMRES techniques to develop extremely efficient, high-order accurate methods for inviscid (zero-viscosity) compressible aerodynamics problems, including showing that high-order methods can achieve solutions of engineering accuracy more quickly than second-order methods.

Top 2 research impacts:

- Mesh generation algorithms developed in ANSLab have been incorporated into sector-leading commercial meshing software.

- Our work in using numerical simulation results to determine how to modify the mesh to improve the solution is guiding a similar project at ANSYS.

Supervisors

X309 Digital Media Lab (DML)

The DML focuses on research and development in emerging video technologies, with a focus on video processing and compression and on video broadcasting and streaming, for applications ranging from entertainment to training, education and digital health. The lab is host to the TELUS & UBC Digital Media Partnership, which addresses compression and transmission of the massive amount of data required in integrating emerging media formats such as HDR, AR/VR, 360°, Light Field, and Free Navigation. Working with partner TELUS, the DML develops techniques that will support compatibility with different networks and heterogeneous playback devices with different display capabilities.

Supervisors

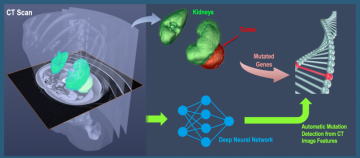

X315 Biomedical and Multimedia Signal Processing Lab; X421 Biomedical Signal and Image Computing Laboratory (BiSICL)

The shared objectives of the Biomedical and Multimedia Signal Processing Lab and BiSICL are to create and develop innovative techniques for computational processing, analysis, understanding, and visualization of biomedical data such as multi-modal radiological images and biological signals. These techniques are developed for application in a clinically focused, disease-specific manner.

Supervisors

Jane Z. Wang, Rafeef Garbi (née Abugharbieh)

X321 Data Communications Lab

The Data Communications Lab is engaged in leading-edge research in many areas of communications and networking. Its focus is on the design and evaluation of architectures, algorithms, protocols, and management strategies for communication systems.

Supervisors

X327 Natural Language Processing (NLP) Lab

The Natural Language Processing (NLP) Lab conducts research in deep learning and natural language processing. Its research program focuses on deep representation learning and natural language socio-pragmatics, with the goal of building ‘social’ machines for improved human health, safer social networking, and reduced information overload.

Supervisors

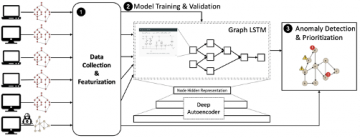

X409, X410, X415 Systopia: Networks, Systems and Security Lab

The Systopia Lab conducts research on a variety of topics, including operating systems, distributed systems, security, and program analysis. Systopia Lab is supported by a number of government and industrial sources, including Cisco Systems, the Communications Security Establishment Canada, Intel Research, the Natural Sciences and Engineering Research Council of Canada (NSERC), Network Appliance, the Office of the Privacy Commissioner of Canada, and the National Science Foundation (NSF).

Supervisors

Aastha Mehta, Alan Wagner, Alex Summers, Ivan Beschastnikh, Margo Seltzer, Mike Feeley, Norm Hutchinson, Nguyen Phong Hoang

X427/X509 Human Communication Technologies (HCT) Lab

The Human Communication Technologies (HCT) Research Laboratory researches a number of key issues that put people “back in the loop” and allow them to communicate experiences to computer systems and each other more effectively. Research topics include advanced video interfaces, mixed/augmented/virtual reality, biomechanical modeling of the human body, brain-computer interfaces, and interactive music and art using machine learning and statistical approaches.

Recent work includes:

- To support our research on biomechanical modeling, we developed ArtiSynth, the only hybrid soft tissue/hard tissue simulation and modeling toolkit, which is used by an international group of modelers. The lab has modeled different parts of the human body for surgical planning as well as articulatory speech synthesis.

- HCT researchers have been studying new forms of video-based learning with our advanced interface, ViDeX. This research explores uses of generative AI technologies in educational settings.

- HCT researchers are investigating new forms of voice expression through novel methods that use brain signals and gestures to control speech and voice.

More information on all the projects at the lab and publications can be found at hct.ece.ubc.ca.

Supervisors

X508 Sensory Perception & Interaction Research Group (SPIN)

SPIN is an interdisciplinary group of researchers who design and build innovative physical, touch-based user interactions to solve real problems for real people. The investigators study and design for human perception and affect, considering both interface usage and the design process. They are concerned with what these interfaces will do, how they will work, the way they will feel, sound and look, and how users will perceive them.

Supervisors

X510 Robotics and Control Laboratory (RCL)

The Robotics and Control Laboratory (RCL) carries out research in medical image analysis, image-guided diagnosis and interventions, and telerobotic and robotic control of mobile machines and manipulators. Applications range from the integration of real-time imaging in image-guided therapy to new concepts for the control of large hydraulic mobile machines such as excavators and small medical telerobotic micromanipulators.

Supervisors

Purang Abolmaesumi, Robert Rohling, Tim Salcudean

X527 Sensorimotor Systems Lab

The mission of the Sensorimotor Systems Lab is to understand human movement by developing advanced computational models. The lab’s research is multi-disciplinary and spans computer graphics, scientific computing, computational mechanics, robotics, biomechanics, and the neural control of movement.

Supervisors

X704 Sound Studio

Faculty and students from the School of Music conduct research in the ICICS Sound Studio in sound spatialization, in gesture and movement tracking technologies, and in the development of on-body sensors for interactive music performances. This research results in international professional performances, conference presentations and publications, and professional commissions. The studio is equipped with specialized multichannel audio (normally 8 and 16 independent channels), digital editing facilities, a MIDI grand piano, and professional recording equipment.

Top 3 research impacts:

- Hamel’s Integrated Multimodal Score-following Environment (iMuse) combines camera-based gesture tracking with automated score-following in Hamel’s NoteAbility Pro music notation system, and is used in several interactive concert pieces involving audio and/or video. See http://debussy.music.ubc.ca/muset/imuse.html

- The Kinect Controlled Artistic Sensing System (KiCASS) tracks up to 26 joints on up to 6 performers, and supports web-based transmission of gesture data between performers worldwide. The data is used to control audio, video and/or lighting in performance. See https://www.youtube.com/watch?v=EpLqE7oQSpA

- Pritchard’s Tracking and Smart Textiles Environment (TaSTE) supports gesture and colour tracking using the KiCASS system, and also uses his Responsive User Body Suits (RUBS) with e-textile sensors for control of audio/video processing and lighting, as well as dance costumes with controllable lighting.

Supervisors

Robert Pritchard, Keith Hamel, Yota Kobayashi

X715 Oculomotor Lab

Research in the Oculomotor Lab is focused on the interaction between human perception, cognition, and eye movements. Studies conducted in the lab investigate how vision controls eye and hand movements, and how eye movements affect our visual perception. Using state-of-the-art video-based eye trackers and motion sensing, the lab targets basic oculomotor processes in healthy young observers, athletes with supernormal abilities, and patients with disorders that affect vision and movement.

Top 3 research impacts:

- Eye movements are a sensitive readout of human visual and cognitive processes.

- Human eye movements are indicators of neurological processes in health and disease, and can be used to document disease progression.

- Eye movements and eye-hand coordination are key skills for athletes in interceptive sports.

Supervisors

X725 Programming Languages for Artificial Intelligence (PLAI) Research Group

The aim of the Programming Languages for Artificial Intelligence (PLAI) group is to go beyond Deep Learning techniques using applied probabilistic machine learning for real-world applications. In collaboration with partners from across industry and academia, they are developing production-quality, open-source software with applications in computational neuroscience, computer vision, robotics and AI. The tools developed are applied to problems ranging from neuroscience to particle physics to engineering manufacturing, while also pushing the limits of our fundamental knowledge in computer science and machine learning.

Top 3 research impacts:

- Developed a probabilistic programming system for simulator inversion and universal simulator approximation, with use cases demonstrated for particle physics and composite material manufacturing

- Developed simpleCNAPS, the current state-of-the-art in few-shot learning for image classification

- Contributed to automation of machine learning through the DARPA Data-Driven Discovery of Models (D3M) program

Supervisors

ICICS Research Clusters

ICICS also supports research clusters in areas of established and emerging strength. We provide various combinations of seed funding, lab and office space, workshop/conference logistical and financial support, as well as administrative, grant-writing, and communications support. This frees cluster faculty to focus on their core research activities.